enforcing test-first development with claude code hooks

part of my ai coding workflow.

the problem

i'd ask for one feature. get the feature plus three more. "oh that's not bad, i actually need that." a week later: spaghetti code no one wants to touch.

the problem wasn't the ai. i never forced it to think about tests first.

without that constraint:

- code works until it doesn't

- features drift from spec

- no documentation of expected behavior

- regressions when you touch adjacent code

i wanted claude to literally be unable to proceed without test files in the plan.

the solution

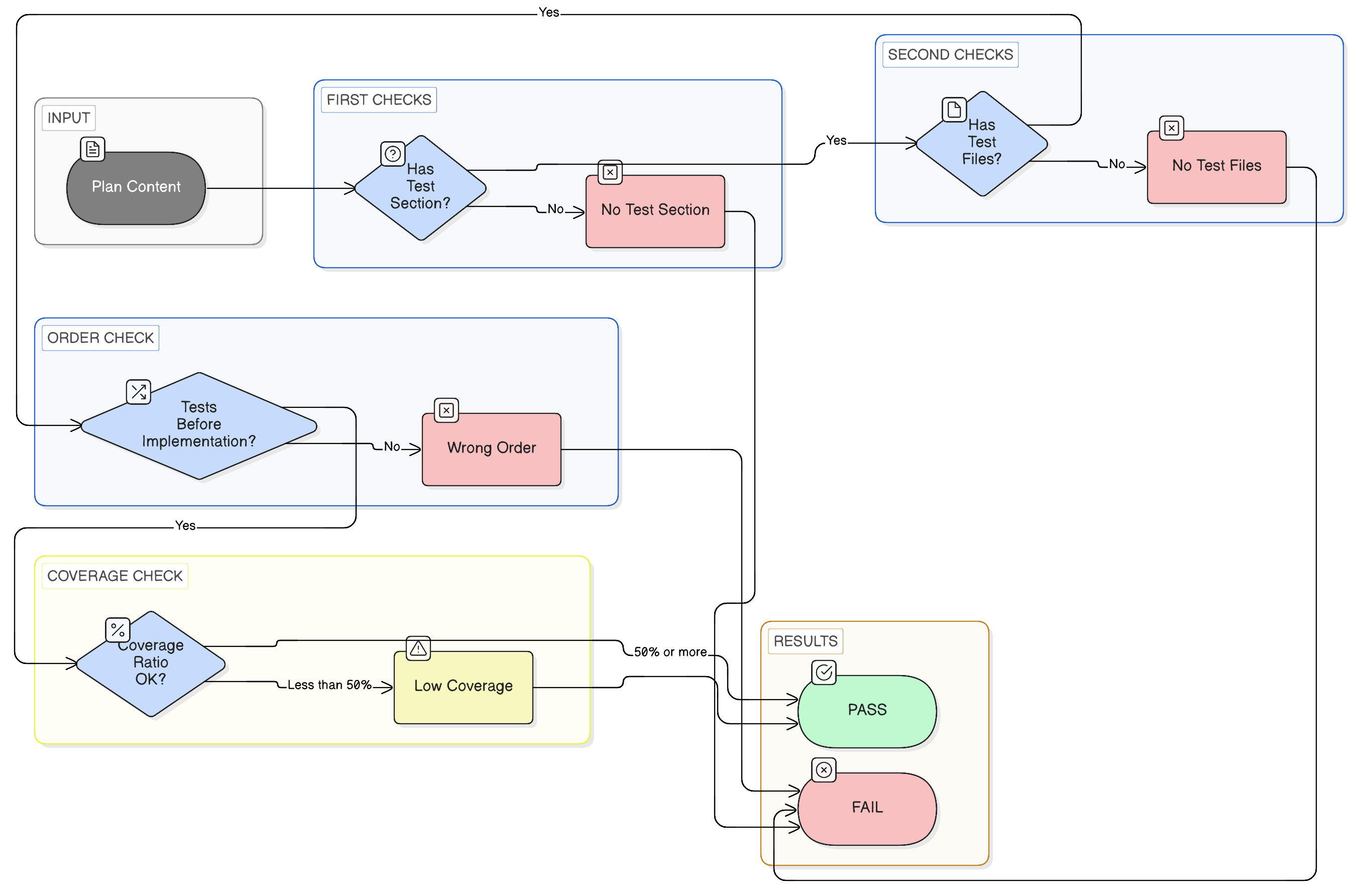

a bash script that:

- scans the plan for test file patterns

- scans for implementation file patterns

- checks that tests appear BEFORE implementation

- returns json with pass/fail and issues

if it fails, the critique hook blocks with exit code 2.
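the ordering check boils down to comparing the first line that mentions a test file with the first line that mentions an implementation file. a minimal sketch of just that step, using `grep -n` instead of a line-by-line loop. `tests_come_first` is a name made up for illustration, and both regexes are simplified stand-ins, not the full patterns.

```shell
#!/bin/bash
# Sketch only: tests_come_first is a hypothetical helper, and the two
# regexes are simplified versions of the real patterns.

TEST_RE='(test_[a-z0-9_]+\.py|\.(test|spec)\.(ts|tsx|js|jsx)|__tests__/)'
IMPL_RE='(src|app|lib)/[a-z0-9_/-]+\.(py|ts|tsx|js|jsx)'

# Succeeds (exit 0) when the first test file is listed on an earlier
# line than the first implementation file. $1 is the plan text.
tests_come_first() {
  local plan="$1" first_test first_impl
  # grep -n prefixes each match with "LINE:", so cut keeps the line number
  first_test=$(grep -niE "$TEST_RE" <<< "$plan" | head -n 1 | cut -d: -f1)
  # drop test files before picking the first impl line, so that
  # src/__tests__/x.test.ts is not mistaken for implementation
  first_impl=$(grep -niE "$IMPL_RE" <<< "$plan" | grep -viE "$TEST_RE" | head -n 1 | cut -d: -f1)
  # no match at all counts as "very late" (same sentinel idea as 999999)
  [[ "${first_test:-999999}" -lt "${first_impl:-999999}" ]]
}

plan=$'## Test Files\n- tests/test_api.py\n\n## Implementation Files\n- src/api.py'
if tests_come_first "$plan"; then
  echo "order ok"
else
  echo "tests must come first"
fi
```

a plan with no test files at all also fails this comparison, because the missing line number falls back to the sentinel.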

what it checks

test file patterns

the script recognizes:

- test_*.py (pytest)

- *_test.py (pytest)

- *.test.ts / *.test.tsx (vitest/jest)

- *.spec.ts / *.spec.tsx (vitest/jest)

- tests/*.py

- __tests__/*

implementation file patterns

non-test files in:

- src/

- app/

- components/

- lib/

- services/

- api/

- models/

- utils/

- helpers/
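to make the two pattern groups concrete, here is a small sketch that labels a single plan line as test, impl, or other. `classify_line` is a hypothetical helper, not something in the hook, and the regexes are based on the ones in the script. a line that matches both groups is treated as a test.

```shell
#!/bin/bash
# Sketch: classify one plan line. classify_line is a hypothetical helper;
# the regexes are based on the patterns used by the validator.

TEST_RE='(test_[a-z0-9_]+\.py|[a-z0-9_]+_test\.py|\.test\.(ts|tsx|js|jsx)|\.spec\.(ts|tsx|js|jsx)|tests/[a-z0-9_]+\.py|__tests__/)'
IMPL_RE='(src/|app/|components/|lib/|services/|api/|models/|utils/|helpers/)[a-z0-9_/-]+\.(py|ts|tsx|js|jsx)'

classify_line() {
  if grep -qiE "$TEST_RE" <<< "$1"; then
    echo test      # test patterns win, even for files under src/
  elif grep -qiE "$IMPL_RE" <<< "$1"; then
    echo impl
  else
    echo other     # headings, prose, acceptance criteria, ...
  fi
}

classify_line "- tests/api/v1/test_collections.py"     # test
classify_line "- src/components/CollectionHeader.tsx"  # impl
classify_line "- Links to profile"                     # other
```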

the script

#!/bin/bash
# Test-First Plan Validator
# Usage: echo "$PLAN_CONTENT" | ./test-first-check.sh
# Requires: jq (builds the issues array)

set -euo pipefail

PLAN_CONTENT=$(cat)

HAS_TEST_SECTION=false
HAS_TEST_FILES=false
TESTS_BEFORE_IMPL=false
TEST_FILE_COUNT=0
IMPL_FILE_COUNT=0
FIRST_TEST_LINE=999999
FIRST_IMPL_LINE=999999
ISSUES=()
LINE_NUM=0

while IFS= read -r line; do
  LINE_NUM=$((LINE_NUM + 1))

  # test section headers, e.g. "## Tests" or "## Test Files"
  if grep -qiE "^#+[[:space:]]*tests?" <<< "$line"; then
    HAS_TEST_SECTION=true
  fi

  # test file patterns
  if grep -qiE "(test_[a-z0-9_]+\.py|[a-z0-9_]+_test\.py|\.test\.(ts|tsx|js|jsx)|\.spec\.(ts|tsx|js|jsx)|tests/[a-z0-9_]+\.py|__tests__/)" <<< "$line"; then
    HAS_TEST_FILES=true
    TEST_FILE_COUNT=$((TEST_FILE_COUNT + 1))
    if [[ $LINE_NUM -lt $FIRST_TEST_LINE ]]; then
      FIRST_TEST_LINE=$LINE_NUM
    fi
  fi

  # implementation file patterns (non-test): skip anything that looks like
  # a test file or lives under tests/ or __tests__/
  if grep -qiE "(src/|app/|components/|lib/|services/|api/|models/|utils/|helpers/)[a-z0-9_/-]+\.(py|ts|tsx|js|jsx)" <<< "$line" && \
     ! grep -qiE "(test_|_test\.|\.test\.|\.spec\.|__tests__/|(^|[^a-z0-9_-])tests/)" <<< "$line"; then
    IMPL_FILE_COUNT=$((IMPL_FILE_COUNT + 1))
    if [[ $LINE_NUM -lt $FIRST_IMPL_LINE ]]; then
      FIRST_IMPL_LINE=$LINE_NUM
    fi
  fi
done <<< "$PLAN_CONTENT"

# order check
if [[ $FIRST_TEST_LINE -lt $FIRST_IMPL_LINE ]]; then
  TESTS_BEFORE_IMPL=true
fi

# build issues
if [[ "$HAS_TEST_SECTION" == false ]]; then
  ISSUES+=("No test section found (expected '## Tests' or '## Test Files')")
fi

if [[ "$HAS_TEST_FILES" == false ]]; then
  ISSUES+=("No test files listed (expected *_test.py, *.test.ts, etc.)")
fi

if [[ "$HAS_TEST_FILES" == true && "$TESTS_BEFORE_IMPL" == false && $IMPL_FILE_COUNT -gt 0 ]]; then
  ISSUES+=("Tests should be listed BEFORE implementation files")
fi

# coverage ratio warning: fewer than one test file per two impl files
# (integer math, same threshold as a ratio below 0.5, no bc needed)
if (( TEST_FILE_COUNT > 0 && IMPL_FILE_COUNT > 0 && TEST_FILE_COUNT * 2 < IMPL_FILE_COUNT )); then
  ISSUES+=("Low coverage: $TEST_FILE_COUNT test files for $IMPL_FILE_COUNT impl files")
fi

# pass/fail: only a plan with no test files fails outright;
# the other issues are reported but do not flip "passed" on their own
PASSED=true
if [[ "$HAS_TEST_FILES" == false ]]; then
  PASSED=false
fi

# output json
ISSUES_JSON="[]"
if [[ ${#ISSUES[@]} -gt 0 ]]; then
  ISSUES_JSON=$(printf '%s\n' "${ISSUES[@]}" | jq -R . | jq -s .)
fi

cat << EOF
{
  "passed": $PASSED,
  "hasTestSection": $HAS_TEST_SECTION,
  "hasTestFiles": $HAS_TEST_FILES,
  "testsBeforeImpl": $TESTS_BEFORE_IMPL,
  "testFileCount": $TEST_FILE_COUNT,
  "implFileCount": $IMPL_FILE_COUNT,
  "issues": $ISSUES_JSON
}
EOF

what a valid plan looks like

the hook forces plans to follow this structure:

## User Story

As a user, I want to see collection creators...

## Acceptance Criteria

- Creator name appears below title

- Links to profile

- Shows "Anonymous" if no creator

## Test Files

### Backend (pytest)

- tests/api/v1/test_collections.py

### Frontend (vitest)

- src/components/__tests__/CollectionHeader.test.tsx

- src/hooks/__tests__/useCreator.test.ts

## Implementation Files

### Backend

- src/app/api/v1/endpoints/collections.py

### Frontend

- src/components/CollectionHeader.tsx

- src/hooks/useCreator.ts

tests first. implementation second. no exceptions.

how it blocks

when the check fails, the main hook outputs to stderr and exits with code 2:

TEST-FIRST VIOLATION - Plan Rejected

Issues:

- No test section found (expected '## Tests' or '## Test Files')

- No test files listed (expected *_test.py, *.test.ts, etc.)

Fix: Add test files BEFORE implementation files in your plan.

claude sees this and has to revise the plan before proceeding.
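a rough sketch of the blocking side, assuming the validator is saved as ./test-first-check.sh and the plan text arrives on stdin. `gate_plan` and the `CHECKER` variable are names invented for this sketch. extracting the plan from whatever input claude code hands to the hook is not shown. the part that matters is the message on stderr plus exit code 2.

```shell
#!/bin/bash
# Sketch of the blocking step. gate_plan and CHECKER are hypothetical names;
# CHECKER is assumed to point at the validator script.

CHECKER="${CHECKER:-./test-first-check.sh}"

# Reads plan text on stdin and runs the validator on it.
# Returns 0 when the plan passes, 2 (with a message on stderr) when it fails.
gate_plan() {
  local result
  result=$("$CHECKER") || true   # the validator inherits this function's stdin

  if grep -qE '"passed":[[:space:]]*true' <<< "$result"; then
    return 0
  fi

  {
    echo "TEST-FIRST VIOLATION - Plan Rejected"
    echo "Issues:"
    # list the issues with jq; fall back to the raw json if jq is
    # missing or the output is not valid json
    jq -r '.issues[] | "- " + .' <<< "$result" 2> /dev/null || echo "$result"
    echo "Fix: Add test files BEFORE implementation files in your plan."
  } >&2
  return 2
}

# in the hook itself this would be something like:
#   echo "$PLAN_CONTENT" | gate_plan || exit 2
```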

results

from my debug logs, here's what a revision cycle looks like:

[16:25:02] Test check result: passed: false, testFileCount: 0

[16:25:02] BLOCKING: Test-first check failed

[16:26:15] Test check result: passed: true, testFileCount: 5, implFileCount: 2

[16:26:15] Test-first check PASSED - starting LLM critique

first attempt: no tests, blocked. second attempt: 5 test files, proceeds to critique.
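a logger along these lines would produce that timestamp format. `log_debug` and `DEBUG_LOG` are placeholder names and the log path is an assumption, so treat it as a sketch.

```shell
#!/bin/bash
# Sketch: append "[HH:MM:SS] message" lines to a debug log.
# log_debug and DEBUG_LOG are placeholder names; the path is an assumption.

DEBUG_LOG="${DEBUG_LOG:-/tmp/test-first-hook.log}"

log_debug() {
  printf '[%s] %s\n' "$(date +%H:%M:%S)" "$*" >> "$DEBUG_LOG"
}

# example calls:
#   log_debug "Test check result: passed: false, testFileCount: 0"
#   log_debug "BLOCKING: Test-first check failed"
```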

limitations

- doesn't verify test content is meaningful (just that files are listed)

- pattern matching is regex-based, could miss unusual conventions

- doesn't check that test files actually get created during implementation

the vibecheck at the end catches some of these, but it's not perfect.
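the third gap could be narrowed with a follow-up check once implementation is done. a hedged sketch, not part of the current hook: pull the test file paths out of the plan and report any that don't exist on disk. `missing_test_files` is a hypothetical helper, and the path regex is deliberately loose.

```shell
#!/bin/bash
# Sketch only, not part of the hook described here.
# missing_test_files is a hypothetical helper name.

# Reads plan text on stdin and prints every listed test file that does
# not exist under directory $1 (default: current directory).
# Returns 1 if any file is missing, 0 otherwise.
missing_test_files() {
  local root="${1:-.}" plan path missing=0
  plan=$(cat)
  while IFS= read -r path; do
    [[ -z "$path" ]] && continue
    if [[ ! -e "$root/$path" ]]; then
      echo "$path"
      missing=1
    fi
  done < <(grep -oiE '[a-z0-9_./-]*(test_[a-z0-9_]+\.py|[a-z0-9_]+_test\.py|[a-z0-9_-]+\.(test|spec)\.(ts|tsx|js|jsx))' <<< "$plan" | sort -u)
  return "$missing"
}

# example: missing_test_files . < plan.md
```

this only proves the files exist. whether the tests inside them are meaningful is still the first limitation.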

related

- my ai coding workflow: the full system

- multi-llm plan critique: what happens after test-first passes

the script is ~80 lines of bash. could be cleaner but it does the job.